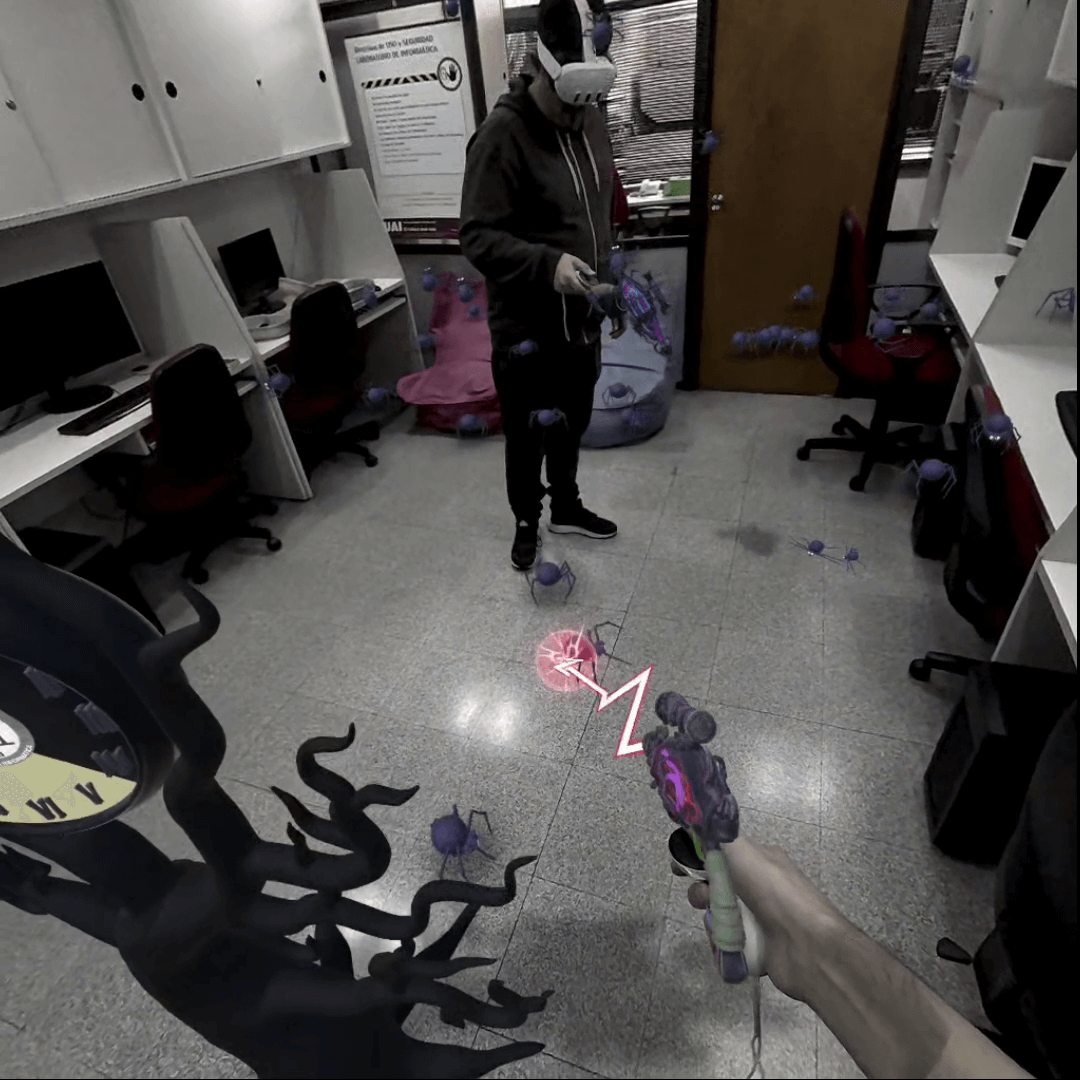

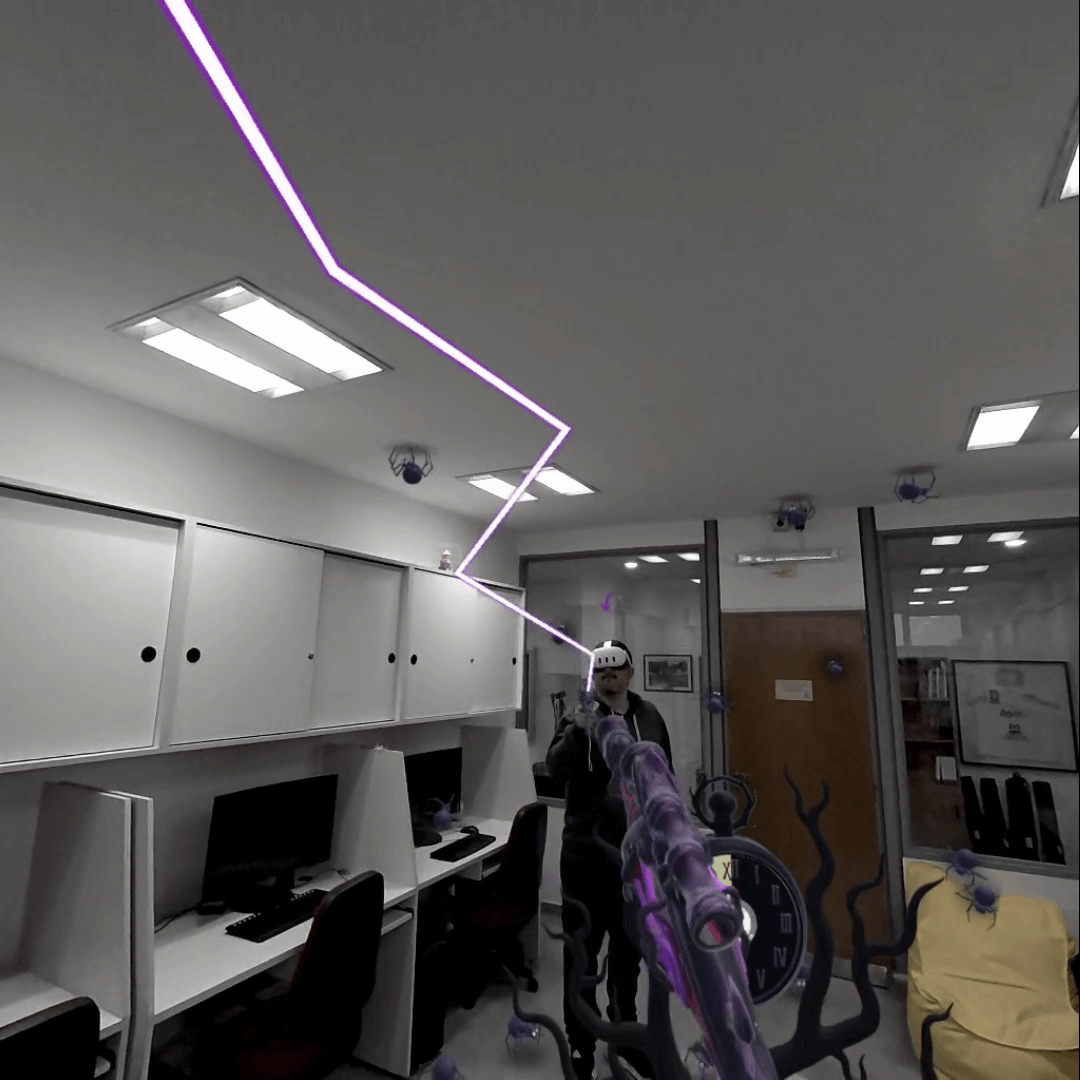

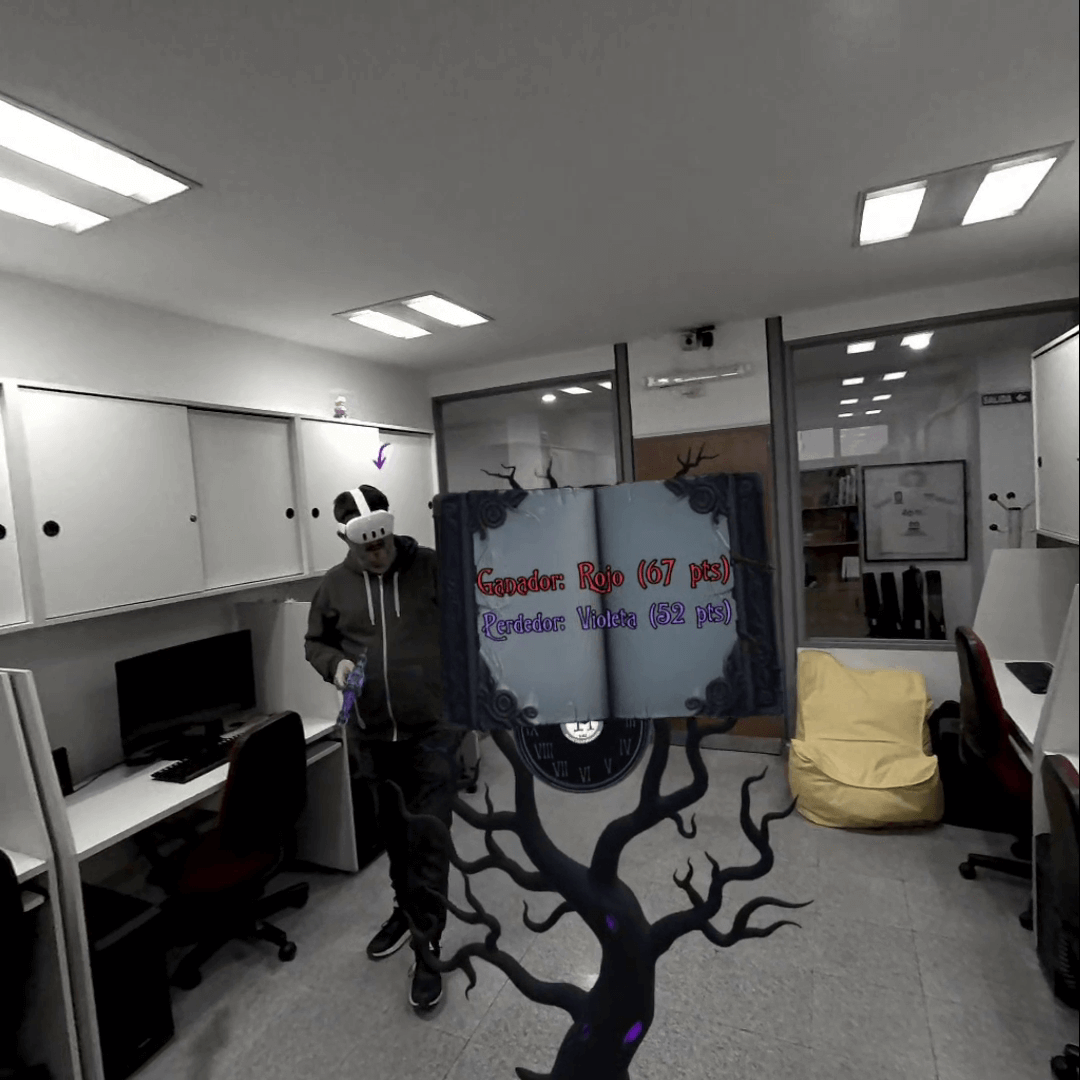

Spider Hunt

Spider Hunt is a mixed reality 1v1 multiplayer game in which two players compete to eliminate as many spiders as possible within a time limit. Each player aims and fires a lightning blaster within a shared physical space. Spiders spawn dynamically around the real environment (on walls, floors, ceilings, and even furniture), blending real and virtual elements in real time.

The process

The project began in early 2025 at CAETI LIVE, when I set out to create a mixed reality multiplayer experience that would run entirely over LAN, with no internet connection required. As I got deeper into research and testing, it became clear that this goal was harder to reach than I had expected.

I started development with the Meta SDK. However, the version available at the time relied on Unity Netcode for GameObjects together with Unity's Relay and Lobby services, which required internet access and introduced noticeable latency between players. That lag made the gameplay feel far from the fast, local experience I wanted, so I moved away from that approach.

Next, I experimented with the XR Interaction Toolkit, building on a community-made VR LAN template created by italovs and adapting it for mixed reality. This setup worked better for local data exchange, but I soon hit another limitation: even though player data was shared correctly over LAN, Meta’s spatial tracking features still required an internet connection. Around the same time, I was also struggling to synchronize players and shared content through anchors, which made consistent co-location difficult.

After some time, as Meta’s SDK evolved and introduced key improvements, I decided to revisit their solution. The newer version finally supported automatic device discovery over LAN, enabling headsets on the same network to exchange game data without relying on Unity’s cloud services. It also maintained compatibility with Meta’s spatial features when needed.
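
To illustrate the general idea behind LAN discovery, here is a minimal Python sketch of one common approach: the host broadcasts a small UDP announcement and clients listen for it. This is not how Meta's SDK implements discovery (the write-up does not describe its internals); the port number, the `MAGIC` tag, and all function names are assumptions made up for this example.

```python
import json
import socket

DISCOVERY_PORT = 47777          # hypothetical port, chosen for illustration
MAGIC = "spider-hunt-demo"      # hypothetical tag used to ignore unrelated traffic


def build_announcement(host_name: str, game_port: int) -> bytes:
    """Encode the packet a host would broadcast so clients can find it."""
    payload = {"magic": MAGIC, "name": host_name, "port": game_port}
    return json.dumps(payload).encode("utf-8")


def parse_announcement(data: bytes):
    """Return (name, port) for a valid announcement, or None otherwise."""
    try:
        payload = json.loads(data.decode("utf-8"))
    except ValueError:  # covers both bad UTF-8 and bad JSON
        return None
    if not isinstance(payload, dict) or payload.get("magic") != MAGIC:
        return None
    name, port = payload.get("name"), payload.get("port")
    if not isinstance(name, str) or not isinstance(port, int):
        return None
    return name, port


def broadcast_once(host_name: str, game_port: int) -> None:
    """Send one announcement to the local network's broadcast address."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(build_announcement(host_name, game_port),
                    ("255.255.255.255", DISCOVERY_PORT))


def listen_once(timeout_s: float = 2.0):
    """Wait for one announcement; return (host_ip, name, port) or None."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
        sock.bind(("", DISCOVERY_PORT))
        sock.settimeout(timeout_s)
        try:
            data, (ip, _) = sock.recvfrom(1024)
        except socket.timeout:
            return None
    parsed = parse_announcement(data)
    return None if parsed is None else (ip, *parsed)
```

In this sketch a host would call `broadcast_once` periodically while a client calls `listen_once` and then connects directly to the returned IP and port, so no cloud lobby or relay is involved.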

One of the most impactful updates was the ability to share the host’s room scan with all connected devices, so only the host needs to scan the environment. This shared spatial mesh ensures that every player perceives objects, surfaces, and collisions in the same physical positions, providing precise co-location without redundant scanning.
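
The reason a shared reference matters can be shown with a small amount of math. Each headset has its own world origin, so a spider's position only lines up physically if it is expressed relative to something both devices agree on. The Python sketch below is a simplified illustration of that idea, not Meta's API: it assumes a single shared anchor, rotation about the vertical axis only, and hypothetical names (`AnchorPose`, `host_to_client`).

```python
import math
from dataclasses import dataclass


@dataclass
class AnchorPose:
    """Where one headset sees the shared anchor: position plus yaw in radians."""
    x: float
    y: float
    z: float
    yaw: float


def rotate_y(v, angle):
    """Rotate a 3D vector about the vertical (y) axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return (c * v[0] + s * v[2], v[1], -s * v[0] + c * v[2])


def world_to_anchor(point, anchor):
    """Express a point from a headset's world frame relative to the anchor."""
    offset = (point[0] - anchor.x, point[1] - anchor.y, point[2] - anchor.z)
    return rotate_y(offset, -anchor.yaw)


def anchor_to_world(local, anchor):
    """Convert an anchor-relative point back into a headset's world frame."""
    r = rotate_y(local, anchor.yaw)
    return (r[0] + anchor.x, r[1] + anchor.y, r[2] + anchor.z)


def host_to_client(point, host_anchor, client_anchor):
    """Map a host-frame point to the client frame through the shared anchor."""
    return anchor_to_world(world_to_anchor(point, host_anchor), client_anchor)


# Example: the same physical anchor as observed by each headset.
host_anchor = AnchorPose(1.0, 0.0, 2.0, math.pi / 2)
client_anchor = AnchorPose(-3.0, 0.0, 0.5, 0.0)
spider_on_client = host_to_client((1.0, 1.5, 3.0), host_anchor, client_anchor)
```

Only the anchor-relative coordinates need to travel over the network; each device converts them into its own frame, which is why a single shared scan is enough for both players to see a spider on the same wall.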

In the end, I chose to keep working with this framework because it offers a stable and consistent foundation for mixed reality multiplayer setups. As of October 2025, I still consider it the most practical and robust solution for this kind of experience.

Screenshots